
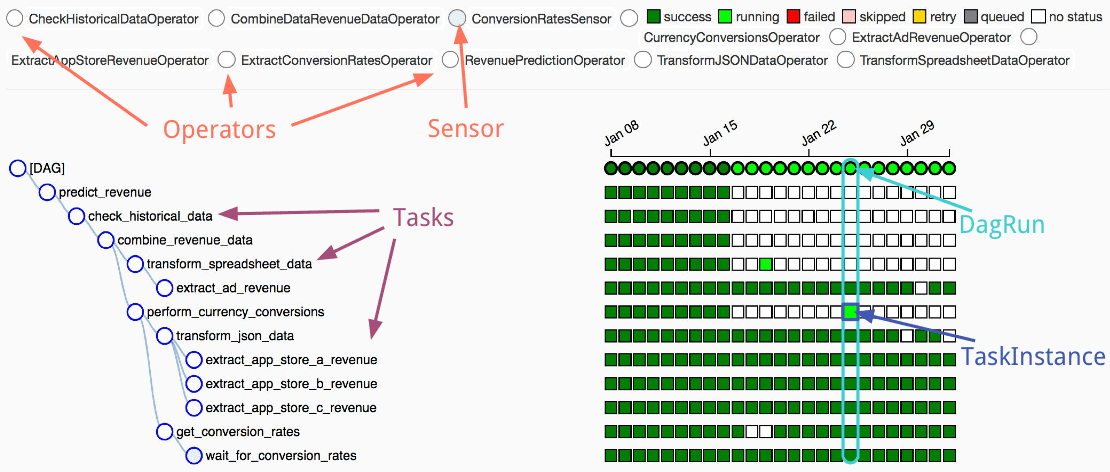

There have been many improvements, for sure, and it is normal that users need some time to get used to them. In this article, we’d like to focus on the last two changes and explain how they make Airflow 2.0 setup easier.

Serialization is quite an important functionality of Apache Airflow. The term refers to storing a serialized representation of the DAGs in the database, in a lightweight JSON format.

In the old version of Airflow, both the web server and the scheduler required access to the DAG files in order to read and parse them. Parsing the same DAGs in both places is inefficient: the work is duplicated, which hurts Airflow's overall performance. With serialization, the scheduler parses the DAG files and keeps a representation in the database, and the web server later fetches it from there to fill the user interface. Because the web server only reads these stored representations to render the Airflow UI, it no longer parses DAG files itself, so rendering the UI does not add parsing overhead.
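To make the flow concrete, here is a minimal sketch of the idea using only Python's standard library. It is not Airflow's implementation: `ToyDag`, `init_db`, `scheduler_store`, `webserver_load` and the two-column table layout are hypothetical, heavily simplified stand-ins. The "scheduler" turns a parsed DAG into JSON and writes it to a database, and the "web server" reads that JSON back without ever touching a DAG file.

```python
import json
import sqlite3
from dataclasses import asdict, dataclass, field


# Hypothetical, simplified stand-in for a DAG: an id, task names and
# dependency edges. Real Airflow DAG objects carry far more information.
@dataclass
class ToyDag:
    dag_id: str
    tasks: list[str]
    edges: list[tuple[str, str]] = field(default_factory=list)


def init_db() -> sqlite3.Connection:
    """Create an in-memory database with an assumed, simplified table layout."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE serialized_dag (dag_id TEXT PRIMARY KEY, data TEXT NOT NULL)"
    )
    return conn


def scheduler_store(conn: sqlite3.Connection, dag: ToyDag) -> None:
    """Scheduler side: serialize the parsed DAG to JSON and upsert it by dag_id."""
    payload = json.dumps(asdict(dag))
    conn.execute(
        "INSERT OR REPLACE INTO serialized_dag (dag_id, data) VALUES (?, ?)",
        (dag.dag_id, payload),
    )
    conn.commit()


def webserver_load(conn: sqlite3.Connection, dag_id: str) -> dict:
    """Web server side: read the stored JSON back; no DAG file is parsed here."""
    row = conn.execute(
        "SELECT data FROM serialized_dag WHERE dag_id = ?", (dag_id,)
    ).fetchone()
    if row is None:
        raise KeyError(dag_id)
    return json.loads(row[0])


conn = init_db()
scheduler_store(conn, ToyDag("etl", ["extract", "load"], [("extract", "load")]))
print(webserver_load(conn, "etl"))
# prints {'dag_id': 'etl', 'tasks': ['extract', 'load'], 'edges': [['extract', 'load']]}
```

Note that JSON has no tuple type, so the edges come back as lists; the web server only needs the data to draw the UI, not the original Python objects.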
